ScyllaDB is one of those products that's genuinely better on the technical merits—a high-performance NoSQL database that consistently outperforms Cassandra and DynamoDB in real-world benchmarks. But technical superiority doesn't guarantee developer discovery, especially when AI agents are increasingly mediating how developers find and evaluate tools.

We've been exploring the rise of AEO (answer engine optimization) over SEO and why AI agents won't read your docs. But theory only goes so far. We needed to build something real.

So we built an MCP server for ScyllaDB. Not because they asked us to (they didn't), but because it's exactly the kind of product that deserves better AI-native distribution—technically excellent, with a strong developer community, but potentially invisible to agents that can't programmatically access it.

Why ScyllaDB?

ScyllaDB is a perfect test case for AI-native distribution for several reasons:

- Complex evaluation criteria: Developers choosing a database need to understand performance characteristics, data modeling patterns, and operational requirements

- High switching costs: Once you've committed to a database, you're locked in. The evaluation phase is critical

- Technical depth: You can't fake database expertise. Either the AI agent understands distributed systems or it doesn't

- Competitive landscape: ScyllaDB competes with well-known alternatives. If AI agents don't know about it, developers won't either

What the MCP Server Does

The scylladb-mcp-server isn't just a ScyllaDB wrapper—it's a multi-database comparison platform that lets AI agents perform side-by-side evaluations across competing solutions. More importantly, it gives developers the tools to validate agent recommendations with real data.

When an agent suggests "use ScyllaDB for this workload," developers can use the same MCP tools to verify that recommendation—running their own benchmarks, testing their own queries, and making informed decisions based on evidence rather than taking the agent's word for it.

Database Comparison: ScyllaDB vs DynamoDB

The MCP server connects to both ScyllaDB and Amazon DynamoDB, enabling agents to:

Side-by-Side Queries: Run identical queries against both databases and compare latency, throughput, and consistency

Pricing Analysis: Calculate real costs based on actual workload patterns—not theoretical pricing calculators

Workload-Specific Advice: Get recommendations tailored to time-series, IoT, user sessions, or other specific use cases

Migration Assessment: Understand schema differences, query translation, and migration complexity
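As a rough illustration of the side-by-side idea, here is a minimal sketch of a latency comparison harness. It is not taken from the scylladb-mcp-server code: the function names (`measure_latencies`, `summarize`, `compare`) and the two stand-in workloads are assumptions made for this example. In practice each callable would issue the same query against a different database.

```python
import statistics
import time
from typing import Callable, Dict, List


def measure_latencies(operation: Callable[[], object], runs: int = 100) -> List[float]:
    """Call `operation` `runs` times; return each call's latency in milliseconds."""
    latencies = []
    for _ in range(runs):
        start = time.perf_counter()
        operation()
        latencies.append((time.perf_counter() - start) * 1000.0)
    return latencies


def summarize(latencies: List[float]) -> Dict[str, float]:
    """Reduce raw latencies to p50, p95, and p99."""
    # quantiles(n=100) returns 99 cut points; index 49 is the 50th percentile.
    cuts = statistics.quantiles(latencies, n=100)
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def compare(backends: Dict[str, Callable[[], object]], runs: int = 100) -> Dict[str, Dict[str, float]]:
    """Run the same number of calls against every backend and summarize each."""
    return {name: summarize(measure_latencies(op, runs)) for name, op in backends.items()}


# Stand-in workloads (hypothetical); replace each lambda with a real query call.
report = compare(
    {
        "backend_a": lambda: sum(range(1_000)),
        "backend_b": lambda: sum(range(10_000)),
    },
    runs=50,
)
for name, stats in report.items():
    print(name, {k: round(v, 4) for k, v in stats.items()})
```

Reporting percentiles rather than a mean keeps tail latency visible, which is usually what matters when comparing databases under load.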

Vector Database Comparison: Pinecone vs ScyllaDB Vector

With the recent launch of ScyllaDB's cloud vector database, the MCP server also enables vector search comparisons against Pinecone:

Embedding Performance: Compare indexing speed, query latency, and recall accuracy

Hybrid Search: Test combinations of vector similarity and traditional filtering

Cost Modeling: Evaluate pricing at different scales and query volumes
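Recall accuracy is the least self-explanatory metric in that list, so here is a small sketch of how recall@k could be computed against a brute-force ground truth. The helper names and the toy vectors are assumptions for illustration. Neither Pinecone's nor ScyllaDB's client API is used here.

```python
import math
from typing import List, Sequence


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def exact_top_k(query: Sequence[float], vectors: Sequence[Sequence[float]], k: int) -> List[int]:
    """Brute-force ground truth: indexes of the k vectors most similar to `query`."""
    ranked = sorted(
        range(len(vectors)),
        key=lambda i: cosine_similarity(query, vectors[i]),
        reverse=True,
    )
    return ranked[:k]


def recall_at_k(returned_ids: Sequence[int], truth_ids: Sequence[int]) -> float:
    """Fraction of the true top-k neighbours that the index actually returned."""
    truth = set(truth_ids)
    return len(truth & set(returned_ids)) / len(truth) if truth else 0.0


# Toy data (hypothetical): four 2-D vectors and one query.
vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [-1.0, 0.0]]
truth = exact_top_k([1.0, 0.0], vectors, k=2)

# Pretend an approximate index returned ids 0 and 2 for the same query.
print(truth, recall_at_k([0, 2], truth))
```

Brute-force search is only practical on a sample of the dataset, but a sample is usually enough to estimate recall for an approximate index.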

Four Ready-to-Deploy Demo Applications

Rather than abstract benchmarks, the MCP server includes four complete demo applications that agents can instantly deploy on either database for real-world comparison:

- IoT Time-Series: Sensor data ingestion with time-windowed aggregations

- User Session Store: High-velocity reads/writes with TTL-based expiration

- Product Catalog: Complex queries with secondary indexes and materialized views

- Real-Time Analytics: Event streaming with incremental aggregation

Each demo comes with realistic data generators, so agents can populate both databases with identical datasets and run true apples-to-apples comparisons.
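One way to get identical datasets on both sides is a seeded generator. The sketch below is an assumption about how such a generator might look, not the project's actual code: `SensorReading`, `generate_readings`, and every default value are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Iterator


@dataclass(frozen=True)
class SensorReading:
    sensor_id: str
    timestamp: int  # Unix epoch seconds
    temperature_c: float


def generate_readings(
    count: int,
    sensors: int = 10,
    seed: int = 42,
    start_ts: int = 1_700_000_000,
    interval_s: int = 60,
) -> Iterator[SensorReading]:
    """Yield `count` readings; the same arguments always produce the same data."""
    # A private RNG keeps the output independent of global random state.
    rng = random.Random(seed)
    for i in range(count):
        yield SensorReading(
            sensor_id=f"sensor-{i % sensors:04d}",
            timestamp=start_ts + (i // sensors) * interval_s,
            temperature_c=round(20.0 + rng.gauss(0.0, 2.5), 2),
        )


# Two independent runs produce the same rows, so both databases can be
# loaded from separate processes and still hold identical data.
first_run = list(generate_readings(1_000))
second_run = list(generate_readings(1_000))
print(first_run == second_run, first_run[0])
```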

Core Capabilities

Schema Operations: Create keyspaces, tables, indexes, and materialized views on any connected database

Data Operations: Insert, query, update, and delete with full CQL and DynamoDB API support

Performance Analysis: Run EXPLAIN queries, analyze execution plans, identify bottlenecks

Cluster Introspection: Examine topology, node health, replication status, and capacity metrics

The Developer Experience Transformation

Before: Documentation-Centric Discovery

A developer evaluating ScyllaDB would traditionally:

- Search "ScyllaDB vs Cassandra" or "high performance NoSQL database"

- Land on ScyllaDB's documentation site

- Read the getting started guide

- Set up a local instance (20-30 minutes)

- Copy example code from docs

- Encounter errors, return to docs

- Eventually get something working (2-4 hours)

- Decide if it's worth continuing

Total time to meaningful evaluation: half a day to several days.

After: Agent-Mediated Discovery

With the MCP server, the same developer can:

- Ask Claude: "I need a high-performance database for time-series IoT data with sub-millisecond reads. Compare ScyllaDB and DynamoDB for my use case."

- Claude connects to both databases via the MCP server

- Agent deploys the IoT Time-Series demo app to both environments

- Agent generates identical sample data (1M sensor readings) on each

- Agent runs identical query patterns and captures latency percentiles

- Agent calculates projected monthly costs based on the workload

- Developer receives a comparison report with working code for their preferred choice

Total time to meaningful, comparative evaluation: under 5 minutes.

This isn't a simplified demo—it's a real competitive evaluation that would traditionally take a team days or weeks to set up manually.
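The cost projection step in the walkthrough above comes down to simple arithmetic once a workload has been measured. The sketch below contrasts a per-request pricing model with a provisioned-node model. Every price and capacity figure in it is a made-up placeholder, not a real quote from any vendor, and the function names are hypothetical.

```python
import math
from dataclasses import dataclass

HOURS_PER_MONTH = 30 * 24
SECONDS_PER_MONTH = HOURS_PER_MONTH * 3600


@dataclass
class Workload:
    reads_per_second: float
    writes_per_second: float
    storage_gb: float


def per_request_cost(w: Workload, price_per_million_reads: float,
                     price_per_million_writes: float, price_per_gb_month: float) -> float:
    """Monthly cost when every read, write, and stored GB is billed separately."""
    reads = w.reads_per_second * SECONDS_PER_MONTH / 1e6 * price_per_million_reads
    writes = w.writes_per_second * SECONDS_PER_MONTH / 1e6 * price_per_million_writes
    return reads + writes + w.storage_gb * price_per_gb_month


def provisioned_cost(w: Workload, ops_per_node_per_second: float,
                     node_price_per_hour: float, min_nodes: int = 3) -> float:
    """Monthly cost when paying for enough nodes to absorb peak throughput."""
    total_ops = w.reads_per_second + w.writes_per_second
    nodes = max(min_nodes, math.ceil(total_ops / ops_per_node_per_second))
    return nodes * node_price_per_hour * HOURS_PER_MONTH


# Placeholder workload and prices, chosen only to make the arithmetic visible.
workload = Workload(reads_per_second=1_000, writes_per_second=500, storage_gb=100)
on_demand = per_request_cost(workload, 0.10, 0.50, 0.20)
cluster = provisioned_cost(workload, ops_per_node_per_second=10_000, node_price_per_hour=0.50)
print(f"per-request: {on_demand:.2f}  provisioned: {cluster:.2f}")
```

The two models cross over at some throughput, which is why a projection based on the measured workload tends to be more useful than a list price.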

The Compression of Time-to-Value

Developer discovery has evolved through distinct eras—from documentation and books (weeks to adopt), to Google and Stack Overflow (days), to GitHub and social proof (hours). We're now in the agentic era, where AI assistants mediate the entire process in minutes.

1995: Read the book → 4-6 weeks to production

2010: Google it → 1-2 weeks to production

2020: Copy from Stack Overflow → 1-3 days to production

2026: Ask the agent → minutes to working prototype

The gatekeeper has shifted from publishers to search algorithms to community momentum—and now MCP accessibility is becoming an important complement. Products that are easy for agents to access have an advantage in this growing discovery channel.

The Classic Developer Journey (And How It's Evolving)

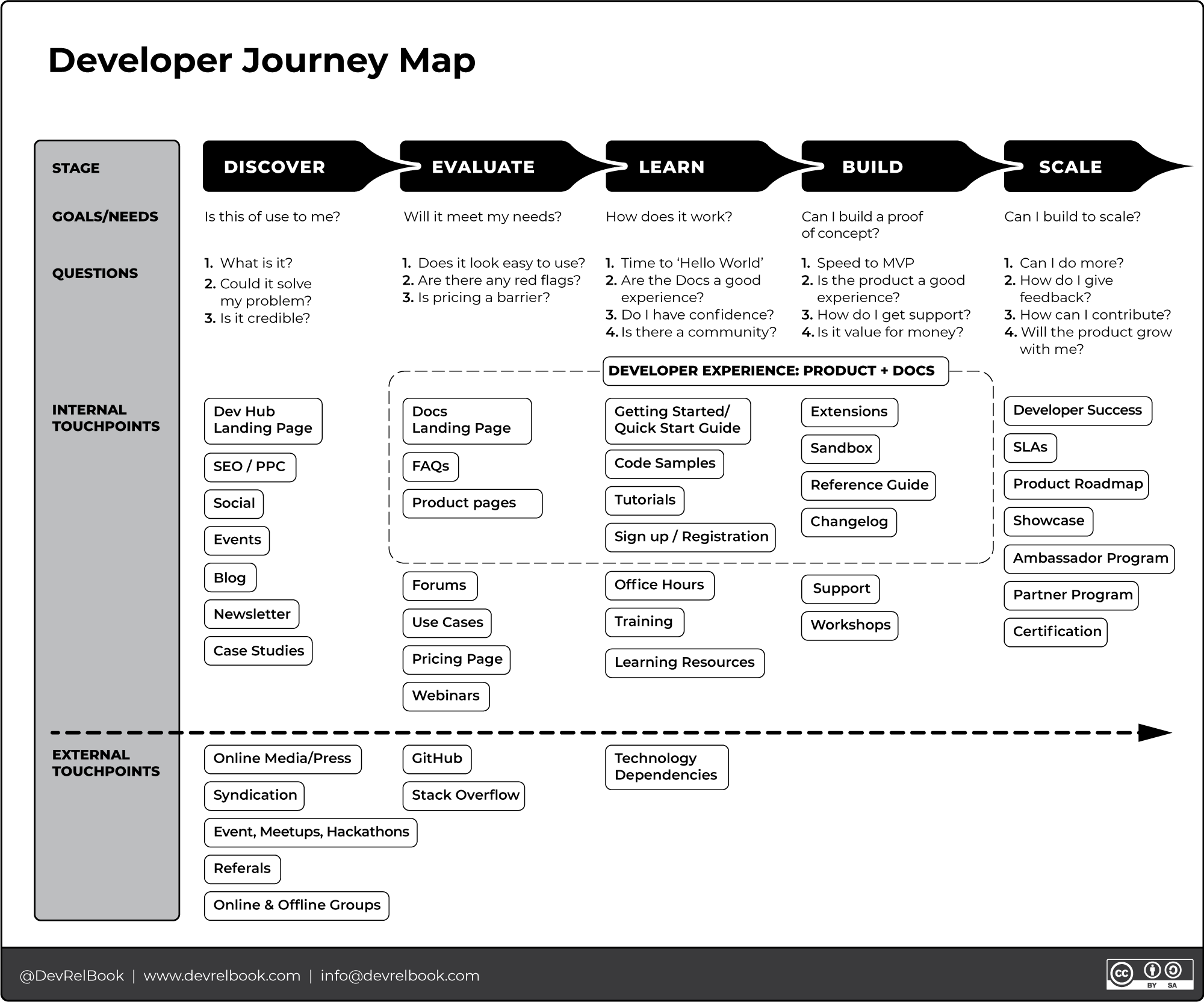

Before we can understand the agentic transformation, we need to acknowledge the foundational work that defined how we think about developer experience. Caroline Lewko's Developer Journey Map is arguably the most influential framework in modern DevRel. Her work—synthesized in Developer Relations: How to Build and Grow a Successful Developer Program—has shaped how hundreds of companies approach developer experience.

We've been using her diagram as a canonical reference for years. It's essentially the industry's gold standard for mapping developer journeys—recognizing that adoption isn't a single moment but a journey with distinct stages, each with its own goals, questions, and touchpoints. Her framework gave DevRel teams a shared language and a systematic approach to optimizing the entire developer lifecycle.

The framework defines five stages that every developer moves through:

1. Discover: "Is this of use to me?" — What is it? Could it solve my problem? Is it credible?

2. Evaluate: "Will it meet my needs?" — Does it look easy to use? Are there red flags? Is pricing a barrier?

3. Learn: "How does it work?" — Time to Hello World? Are the docs good? Is there a community?

4. Build: "Can I build a proof of concept?" — Speed to MVP? How do I get support? Is it value for money?

5. Scale: "Can I build to scale?" — Can I do more? How can I contribute? Will the product grow with me?

What makes Lewko's framework so valuable is the detail beneath these stages. She maps dozens of touchpoints—internal (landing pages, docs, tutorials, code samples, sandbox environments, support) and external (GitHub, Stack Overflow, meetups, referrals)—creating a comprehensive blueprint for developer-centric go-to-market strategy. It's rigorous, practical, and has been battle-tested across companies from startups to enterprises.

And it's entirely optimized for human navigation.

Here's the shift: AI agents interact with products differently than humans. They don't browse documentation sites or ask questions on Stack Overflow. They execute tools and return results.

In the agentic era, the Discover, Evaluate, and Learn stages can collapse into a single agent interaction. This doesn't mean traditional developer experience stops mattering—it means MCP servers and executable interfaces become an important complement to your existing docs and tutorials.

The Agentic Technology Adoption Lifecycle

Geoffrey Moore's classic Technology Adoption Lifecycle described how innovations spread through markets: innovators, early adopters, early majority, late majority, laggards. Lewko's Developer Journey Map described how individual developers move through adoption. Both frameworks assumed human decision-makers at every stage.

In the agentic era, we need a new model—one that accounts for AI agents as the primary discovery and evaluation mechanism.

The key stages:

- Agent Awareness: Can agents find you via MCP, llms.txt, or training data?

- Agent Evaluation: Can they actually run benchmarks and test your product?

- Recommendation: The agent surfaces options with working code

- Human Validation: Developers verify agent claims with real data

The critical insight: developers still make the final decision. MCP servers give them the ability to verify what the agent is telling them—running the same queries, seeing the same benchmarks, and validating recommendations with real data. The agent accelerates; the human validates.
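In code, human validation can be as simple as re-running the measurement and checking the agent's numbers against a tolerance. This is a minimal sketch under that assumption. `Claim`, `verify`, and the sample figures are hypothetical and not part of the MCP server.

```python
from dataclasses import dataclass
from typing import Dict, Iterable


@dataclass
class Claim:
    metric: str
    claimed: float


def verify(claims: Iterable[Claim], measured: Dict[str, float],
           tolerance: float = 0.10) -> Dict[str, bool]:
    """Return True per metric when the re-measured value is within
    `tolerance` (as a fraction) of what the agent reported."""
    results = {}
    for claim in claims:
        observed = measured.get(claim.metric)
        if observed is None:
            # A claim nobody could reproduce counts as unverified.
            results[claim.metric] = False
            continue
        results[claim.metric] = abs(observed - claim.claimed) <= tolerance * abs(claim.claimed)
    return results


# Made-up numbers: what the agent reported vs. what the developer re-measured.
agent_claims = [Claim("p99_ms", 4.0), Claim("ops_per_second", 50_000.0)]
rerun = {"p99_ms": 4.3, "ops_per_second": 30_000.0}
verdict = verify(agent_claims, rerun)
print(verdict)
```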

How the Classic Journey Transforms

Discover → Agent Awareness: MCP servers and llms.txt complement SEO and landing pages.

Evaluate → Agent Evaluation: Agents run actual benchmarks alongside reading docs.

Learn/Build → Human Validation: Developers validate agent recommendations with real data.

Scale → Agentic Scaling: Deeper MCP tools for monitoring, optimization, and troubleshooting.

What We're Measuring

This isn't just a proof of concept—it's an instrumented experiment in AI agent engagement metrics. We're tracking:

- Tool invocations: Which MCP tools do agents use most frequently?

- Query patterns: What questions are developers actually asking?

- Session depth: How many operations per agent session?

- Completion rates: Do agents successfully complete the developer's intent?

- Error recovery: How do agents handle schema mismatches or query failures?

This is Developer Experience Optimization (DEO) in action—understanding the agentic journey the same way we once obsessed over click paths and time-on-page.
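To make those metrics concrete, here is a sketch of how they might be derived from a flat log of tool calls. The event shape and the definitions of "completed" and "recovered" are assumptions chosen for the example, not the definitions the project uses.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, Iterable, List


@dataclass
class ToolEvent:
    session_id: str
    tool: str
    ok: bool


def summarize_sessions(events: Iterable[ToolEvent]) -> dict:
    by_session: Dict[str, List[ToolEvent]] = defaultdict(list)
    tool_counts: Counter = Counter()
    for event in events:
        by_session[event.session_id].append(event)
        tool_counts[event.tool] += 1

    sessions = list(by_session.values())
    # Assumption: a session "completed" if its final call succeeded.
    completed = [s for s in sessions if s[-1].ok]
    # Assumption: a session "recovered" if it hit an error but still ended on success.
    failed = [s for s in sessions if any(not e.ok for e in s)]
    recovered = [s for s in failed if s[-1].ok]

    return {
        "tool_invocations": dict(tool_counts),
        "avg_session_depth": sum(len(s) for s in sessions) / len(sessions) if sessions else 0.0,
        "completion_rate": len(completed) / len(sessions) if sessions else 0.0,
        "error_recovery_rate": len(recovered) / len(failed) if failed else 0.0,
    }


# Made-up log: one session recovers from a failed query, one ends on an error.
log = [
    ToolEvent("s1", "describe_schema", True),
    ToolEvent("s1", "execute_cql", False),
    ToolEvent("s1", "execute_cql", True),
    ToolEvent("s2", "explain_query", False),
]
summary = summarize_sessions(log)
print(summary)
```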

Early Insights

of agent sessions include schema introspection as the first operation—agents want to understand existing structure before suggesting changes

4.2 average tools invoked per session, indicating agents are performing multi-step evaluations rather than simple lookups

89% of EXPLAIN queries lead to schema or query optimization suggestions; agents are actively helping developers write better code

A Note on Telemetry

The scylladb-mcp-server includes experimental, opt-in telemetry—and we want to be transparent about what it does and why.

As MCP adoption grows, growth and developer experience teams face a new challenge: understanding how AI agents consume their APIs. Traditional analytics (page views, time on site, funnel conversion) don't capture agentic interactions. When Claude or Cursor uses your MCP server, how do you know what's working and what isn't?

Our telemetry is designed to help answer these questions—unobtrusively and anonymously:

- Anonymous by default: No PII, no user identification, no query content—just aggregate patterns

- Tool-level metrics: Which MCP tools are invoked, in what sequence, and with what outcomes

- Opt-in and configurable: Telemetry can be disabled entirely or scoped to specific metrics

- Open source: The telemetry code is fully visible in the repo—no hidden tracking

This is still experimental. We're learning alongside everyone else what metrics actually matter for agentic developer experience. If you're building MCP servers and want to understand how agents interact with your tools, we'd love to collaborate—reach out or check the repo for implementation details.
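For readers building their own, the sketch below shows one shape an opt-in, content-free recorder could take. It is an illustration only: the `Telemetry` class and the `MCP_TELEMETRY` environment variable are hypothetical names, not the ones used in the scylladb-mcp-server repo.

```python
import os
from collections import Counter
from typing import Mapping, Optional


class Telemetry:
    """Counts tool outcomes only; never stores query text or user identifiers."""

    def __init__(self, env: Optional[Mapping[str, str]] = None):
        env = os.environ if env is None else env
        # Opt-in: nothing is recorded unless the (hypothetical) variable is set to "1".
        self.enabled = env.get("MCP_TELEMETRY", "0") == "1"
        self.counts: Counter = Counter()

    def record(self, tool: str, ok: bool) -> None:
        """Record that `tool` ran and whether it succeeded; arguments are never seen."""
        if not self.enabled:
            return
        self.counts[(tool, "ok" if ok else "error")] += 1


# Disabled by default: calls are dropped.
silent = Telemetry(env={})
silent.record("execute_cql", ok=True)

# Explicitly enabled: only (tool name, outcome) pairs are counted.
active = Telemetry(env={"MCP_TELEMETRY": "1"})
active.record("execute_cql", ok=True)
active.record("execute_cql", ok=False)
print(dict(silent.counts), dict(active.counts))
```

Because `record` accepts only a tool name and an outcome flag, query content cannot reach the counter even by accident.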

The Implications for Database Marketing

If you're marketing a database (or any developer infrastructure), this experiment reveals some important trends:

1. Documentation Alone Isn't Enough Anymore

Documentation written for humans is still valuable—but it's no longer sufficient on its own. AI agents work best with executable examples, schema definitions, and tool interfaces that complement your existing docs. The good news: MCP servers can help developers validate what they read in your documentation with hands-on testing.

2. Competitive Positioning Happens in Real-Time

When a developer asks "What's the best database for my use case?", the AI agent doesn't consult your positioning statement. It runs queries against available MCP servers and compares actual results. If your database isn't MCP-accessible, you're less likely to be in the consideration set.

3. Developer Relations Becomes Agent Relations

The traditional DevRel playbook—conference talks, blog posts, Twitter engagement—doesn't reach AI agents. The new DevRel isn't just about building MCP servers. It's about refactoring your documentation and developer portal into agent-friendly formats—treating your content as a knowledge graph (or ontology, if you prefer) rather than a collection of web pages.

At VoyantIO, we're working on exactly this problem: finding ways to automate the translation of traditional developer content into a "headless" knowledge graph-driven web presence, specifically optimized for agentic understanding. The goal is to make your product surface when agents solve problems—not through SEO tricks, but through genuine semantic accessibility.

We've also been experimenting with what we call the "advisor voice"—training on transcripts from conference talks, workshops, and technical demos to capture how technical founders and sales engineers actually explain their products. It's still early, but the goal is to help agents represent your product with the authentic voice of your most knowledgeable staff, not generic marketing copy.

How to Build Your Own

The scylladb-mcp-server is open source on GitHub and serves as a template for building MCP servers for other infrastructure products. The key architectural decisions:

```python
# Core MCP server structure
from typing import Optional

from cassandra.cluster import Cluster  # Python driver for Cassandra/ScyllaDB

# `mcp_tool` is assumed to be a tool-registration decorator defined elsewhere.


class ScyllaDBMCPServer:
    def __init__(self, cluster_config: dict):
        self.cluster = Cluster(**cluster_config)
        self.session = self.cluster.connect()

    @mcp_tool("execute_cql")
    def execute_cql(self, query: str, params: Optional[dict] = None):
        """Execute arbitrary CQL with parameterized queries"""
        return self.session.execute(query, params)

    @mcp_tool("explain_query")
    def explain_query(self, query: str):
        """Return query execution plan for optimization"""
        # Assumes the target database accepts an EXPLAIN-style statement.
        return self.session.execute(f"EXPLAIN {query}")

    @mcp_tool("describe_schema")
    def describe_schema(self, keyspace: Optional[str] = None):
        """Introspect cluster schema for agent context"""
        keyspaces = self.cluster.metadata.keyspaces
        # Return only the requested keyspace when one is named.
        return {keyspace: keyspaces[keyspace]} if keyspace else keyspaces
```

The pattern is straightforward: wrap your product's core operations as MCP tools with clear type signatures and docstrings. The docstrings become the agent's understanding of what each tool does.
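To show why signatures and docstrings matter, here is a toy stand-in for a decorator like `mcp_tool`. It is a sketch for illustration, not the project's implementation and not the API of any MCP SDK: the `TOOL_REGISTRY` dict and the way parameters are described are assumptions.

```python
import inspect
from typing import Callable, Dict

# Hypothetical registry: tool name -> what an agent would be told about it.
TOOL_REGISTRY: Dict[str, dict] = {}


def mcp_tool(name: str) -> Callable:
    """Register a function as a tool, capturing its docstring and type hints."""

    def decorator(func: Callable) -> Callable:
        signature = inspect.signature(func)
        TOOL_REGISTRY[name] = {
            "description": inspect.getdoc(func) or "",
            "parameters": {
                p.name: getattr(p.annotation, "__name__", str(p.annotation))
                for p in signature.parameters.values()
                if p.name != "self"
            },
        }
        return func  # the function itself is left unchanged

    return decorator


@mcp_tool("explain_query")
def explain_query(query: str):
    """Return query execution plan for optimization"""
    raise NotImplementedError("stand-in body; only the metadata matters here")


print(TOOL_REGISTRY["explain_query"])
```

A tool registered without a docstring or type hints would show up with an empty description and uninformative parameter types, which leaves the agent guessing about how to call it.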

What This Means for the Industry

We're at an inflection point. Companies that make their products AI-accessible gain an advantage in reaching the next generation of developers. MCP servers and executable interfaces are becoming an important complement to traditional documentation and developer experience investments.

The ScyllaDB MCP server is just one example. Imagine this pattern applied to every database, every cloud service, every developer tool. That's the future we're building toward.

Want to Make Your Product AI-Discoverable?

We help developer tool companies build MCP servers and implement AI-native distribution strategies.

Schedule a Demo

Resources

- scylladb-mcp-server on GitHub (unofficial, community project)

- MCP Protocol Specification

- Developer Relations by Caroline Lewko

- MCP Protocol Explained for Marketing Leaders

- The CMO's Guide to Measuring AI Agent Engagement